This is part of The New Cost of Everything, a five-part series on what AI is actually changing. Post 1 has the foundational framework.

I subscribe to a newsletter called The Rundown AI. It covers major AI announcements — new models, product launches, capability updates — five days a week. On a typical Tuesday: Zuckerberg’s BioHub announcement, a Mayo Clinic cancer detection study, food AI tools, four new product releases across three providers. Normal volume for a weekday.

It’s overwhelming, but also fascinating. I (try to) make time for it, but it’s easy to fall behind within days, sometimes within hours. I’d guess most people reading this post feel the same way. Few of us feel we can take a week of PTO, let alone two, three, or four.

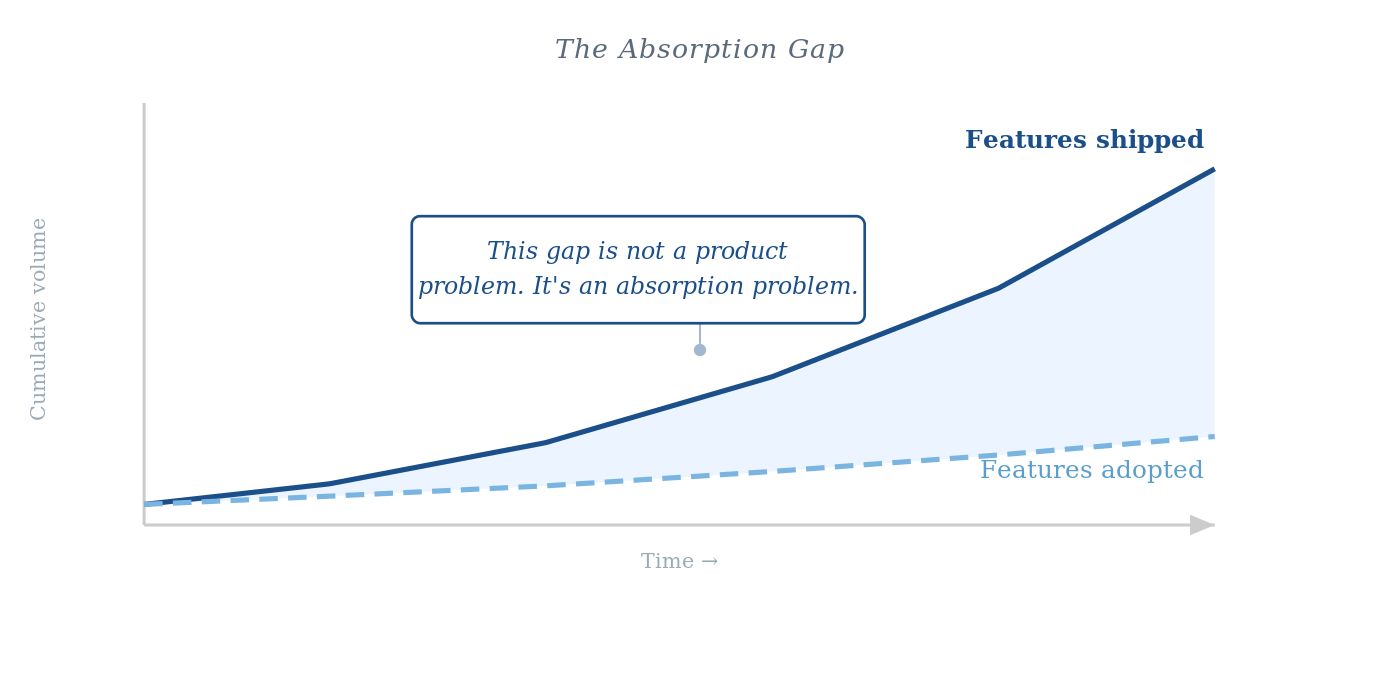

That’s the problem I want to talk about. Not that AI is moving fast; we know that. The problem is the gap between how fast vendors can ship code and how fast a customer can actually derive value from it.

I think of this as the Absorption Gap.

Speedwalking

I picked a few weeks at random to see how much actually changed. For the week of February 10–14, 2026, here’s what shipped in five business days across OpenAI and Anthropic:

(1) OpenAI enhanced Deep Research with MCP server connectivity, the ability to restrict searches to trusted domains, progress tracking, and mid-research interruption.[1] (2) ChatGPT raised the per-message file attachment limit from ten to twenty and expanded the supported file types. (3) Interactive Code Blocks launched with inline editing, chart previews, and split review mode. (4) The context window for Thinking mode expanded to 256,000 tokens. (5) Claude Code released multiple agentic capability updates in the same window.

Five distinct capability changes. Five business days. Two providers.

The week of March 10–17 had similar volume. (1) ChatGPT launched interactive learning modules for more than seventy math and science topics. (2) OpenAI simplified its model selector. (3) Microsoft announced Claude availability inside Microsoft 365 Copilot. (4) Anthropic opened web search to free users. (5) Claude Code kept shipping features at a steady clip.[2]

Pick any single week from the past eighteen months and the list looks much the same. It’s a lot.

The Cost of Speed

In the “Old World,” shipping was expensive. You needed a small army of engineers, rigorous QA, and months of planning. Because code was expensive, we were disciplined about what we built. We had to be.

In the “New World,” code is now cheap. AI-assisted development has collapsed the cost of creation, and companies are shipping more features, more often, than at any point in the industry’s history. But just because you can ship a feature on Tuesday morning doesn’t mean your customer is ready to use it Tuesday afternoon.

When the rate of change exceeds a person’s ability to absorb it, the product starts to feel like noise. Predictability, knowing how a tool will behave next week, is undervalued. You’re adding value to the codebase while the perceived value of the product decreases, because the customer feels constantly behind. They aren’t using 90% of what they’re paying for, and the remaining 10% keeps changing under their feet.

That’s the problem: more shipping, less adoption.

Sorting Mechanisms

In the Crossing the Chasm framework, adoption moves across five segments: innovators, early adopters, early majority, late majority, laggards. Innovators and early adopters tolerate rough edges, seek out new capabilities, and will spend a Saturday catching up on what they missed. The early majority won’t.

The people reading this post are, almost by definition, innovators and early adopters. We track releases. We have opinions about model differences. We treat a feature drop as learning, not labor. Opportunity, not drudgery. We are not representative of the users AI companies need to reach if they want to cross the chasm.

When a mainstream user comes back from a week of PTO to find that the tool they use every day has five new major capabilities, do they feel informed or behind? Consistently feeling behind wears a person down. The gap between what the product can do and what the user actually uses compounds over time, and with every missed release they fall further behind.

Amazon’s self-publishing tools made it dramatically cheaper and easier to publish a book. The result wasn’t that everyone read more books. More books were published, a handful became bestsellers read by millions, and millions of others were read by almost no one. The sheer volume exceeded anyone’s capacity to evaluate independently, so the market needed sorting mechanisms: bestseller lists, recommendation algorithms, curated bundles.

AI software is heading in the same direction. More features don’t mean more usage. They mean a harder sorting problem, and a growing need for a sorting mechanism: a way to filter.

Festina Lente

Caesar Augustus’s standing instruction to his generals was festina lente: make haste slowly. It’s usually read as a case for patience. That’s partly true.

The competitive pressure in AI right now makes slower shipping almost impossible. Companies that pause to let customers catch up will fall behind companies that don’t. So the argument isn’t “slow down.” It’s narrower: shipping speed and absorption quality are not the same investment.

A changelog is not adoption scaffolding. A release note is not an explanation of why a capability matters to someone who wasn’t waiting for it. The phenomenal YouTube tutorial ecosystem, largely built by independent creators rather than the companies themselves, is currently doing more for mainstream AI adoption than most official product communication (I may be biased). That’s not a compliment to YouTube. It’s an observation about a gap the companies haven’t filled.

From Shipping Features to Successful Outcomes

Automation handles FAQ volume. It handles onboarding basics. It handles “how do I do X” questions that have deterministic answers. What it doesn’t handle is the judgment-heavy question that early-majority users actually have: “Of everything this product can now do, what should I actually use, given my specific situation?” Nor does it handle implementation, the “I think I need to connect this to all my systems, and I’m supposed to redo all my workflows, right?” conversation.

Those questions get harder to answer as product complexity compounds. TSIA’s State of Customer Success 2026 report describes the resulting split: AI and digital motions handle routine interactions and smaller accounts, while strategic advisors take on the high-complexity, high-stakes work.[3] The bifurcation is already underway.

Hence the popularity of Customer Success, Forward Deployed Engineers, and Professional Services. The title matters less than the mandate: someone must close the Absorption Gap. That means translating the changelog into a business decision, identifying which new features are relevant to this customer’s workflow, and converting complexity into confident usage. A poorly run motion, or one stripped down in the name of AI efficiency, leaves the “what should I actually use?” question unanswered. The gap widens not because the product failed but because the customer couldn’t absorb what it offered.

I wrote about this from a different angle in The FDE Ships the Product — Someone Must Own the Outcome. The Forward Deployed Engineer builds the bridge. Someone still has to make sure the customer reaches the other side.

The companies that think AI solves the absorption problem are the ones that will lose the early majority. Not to a competitor. To inertia.

The Human Premium

Ironically, as code becomes cheaper, human judgment becomes more expensive. In a world of infinite features, the person who can tell me which three features will actually move my North Star metric is the most valuable person in the room.

That’s why I think we’ve seen the rise of technical CSMs and FDEs: these roles are tasked not just with adoption but with workflow penetration. The products that win the mainstream won’t necessarily ship the most features. They’ll be the ones that make it possible for ordinary users to actually use what’s been built.

Festina lente. Not slower. Smarter.

Next in this series: The Great Bundling. Bundling and unbundling are cyclical. We’re entering a major bundling phase driven by AI, one in which the cost of expansion has dropped so dramatically that every company is under pressure to move into adjacencies. This has happened before. The shape is familiar. Only the speed is different.

Notes

1. OpenAI product updates, February 2026. Specific features as documented in OpenAI’s release communications during this period.

2. Product updates, week of March 10–17, 2026. Claude availability in Microsoft 365 Copilot announced March 9, 2026. Specific ChatGPT model selector changes per OpenAI release documentation.

3. TSIA, State of Customer Success 2026: Proving Value in the Age of AI Economics. The report identifies a clear bifurcation: digital/AI motions handling routine interactions and smaller accounts; strategic CSMs evolving into value managers and advisors for high-complexity accounts.