This is part of The New Cost of Everything, a five-part series on what AI is actually changing and what it isn’t. Each post stands alone, but the argument compounds (like interest) if you read all five. Start at Post 1 if you want the foundational framework.

Let me put my old CPA hat back on. There’s a concept in corporate finance called the hurdle rate. It’s the minimum return a project has to generate before a company will fund it. Set it at 12%, and a project that returns 11.9% doesn’t get built. Not because it’s a bad idea. Because it doesn’t clear the threshold.

Hurdle rates exist for good reasons. Capital is finite. Every dollar committed to one project is a dollar unavailable for another. The hurdle rate is the mechanism that forces prioritization.

AI doesn’t directly change your hurdle rate. But it changes an input: the cost of execution. And when execution cost drops dramatically, the return profile of the same project changes entirely. Projects that couldn’t clear the bar before now can. Because the math changed.

We risk rejecting good ideas on the basis of math that was once foundational and no longer applies.

The Hurdle Rate and WACC Analogy

In capital budgeting, your hurdle rate is typically set at your weighted average cost of capital (WACC) plus a risk premium: the blended cost of debt and equity financing, plus an extra margin for the uncertainty you are taking on. For example, if WACC is 12% and the risk premium is 3%, projects need to return at least 15% to be considered for funding. Drop WACC to 7% through lower interest rates or cheaper equity, and the hurdle falls to 10%: projects returning between 10% and 15%, which couldn’t clear the old bar, now qualify. The investment landscape expands.
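To make that arithmetic concrete, here is a minimal Python sketch that treats the hurdle as WACC plus a flat risk premium and checks a handful of hypothetical project returns against the old and new bars. The candidate returns are made up for illustration, and real hurdle-setting usually involves more judgment than a single addition.

```python
def hurdle_rate_pct(wacc_pct: float, risk_premium_pct: float) -> float:
    """Minimum acceptable return (in percent): cost of capital plus a premium for uncertainty."""
    return wacc_pct + risk_premium_pct


def fundable(expected_returns_pct: list[float], hurdle_pct: float) -> list[float]:
    """Keep only the projects whose expected return meets or beats the hurdle."""
    return [r for r in expected_returns_pct if r >= hurdle_pct]


# Hypothetical expected returns for four shelved projects, in percent.
candidates = [9.0, 11.9, 13.5, 16.0]

old_hurdle = hurdle_rate_pct(wacc_pct=12, risk_premium_pct=3)  # 15
new_hurdle = hurdle_rate_pct(wacc_pct=7, risk_premium_pct=3)   # 10

print(old_hurdle, fundable(candidates, old_hurdle))  # 15 [16.0]
print(new_hurdle, fundable(candidates, new_hurdle))  # 10 [11.9, 13.5, 16.0]
```

Nothing about the projects changed between the two lines; only the bar moved.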

AI operates on the execution side of the same equation. It doesn’t lower the cost of capital (we leave that to the bankers). It lowers the cost of building, analyzing, and operating, which changes what a project actually costs to execute, which changes the return it can generate, which changes whether it clears the hurdle. So the hurdle rate may stay put, or ease only indirectly, while the anticipated return on the same project increases.
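A rough way to see the execution-cost effect is a net present value check: discount a project’s expected cash flows at the hurdle rate and subtract what it costs to build. A positive NPV at the hurdle means the project clears it (for conventional cash flows, the same answer as asking whether its IRR exceeds the hurdle). The sketch below holds the 15% hurdle and the benefits constant and changes only the upfront cost; every dollar figure is a hypothetical assumption, not data from any real project.

```python
def npv(rate: float, upfront_cost: float, annual_cash_flows: list[float]) -> float:
    """Net present value: discount each year's cash flow at `rate`, then subtract the upfront cost."""
    return -upfront_cost + sum(
        cf / (1 + rate) ** year for year, cf in enumerate(annual_cash_flows, start=1)
    )


HURDLE = 0.15             # from the WACC example: 12% WACC + 3% risk premium
BENEFITS = [300_000] * 5  # hypothetical: $300k of value per year for five years

before = npv(HURDLE, 1_200_000, BENEFITS)  # hypothetical pre-AI execution cost
after = npv(HURDLE, 500_000, BENEFITS)     # hypothetical AI-assisted execution cost

print(f"NPV before: {before:,.0f}")  # negative: rejected at the hurdle
print(f"NPV after:  {after:,.0f}")   # positive: same project, same hurdle, now clears
```

The hurdle is identical in both calls; the decision flips because the cost term shrank.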

Projects that were correctly rejected under old cost assumptions deserve a second evaluation under new ones.

Three Eras of the Same Problem

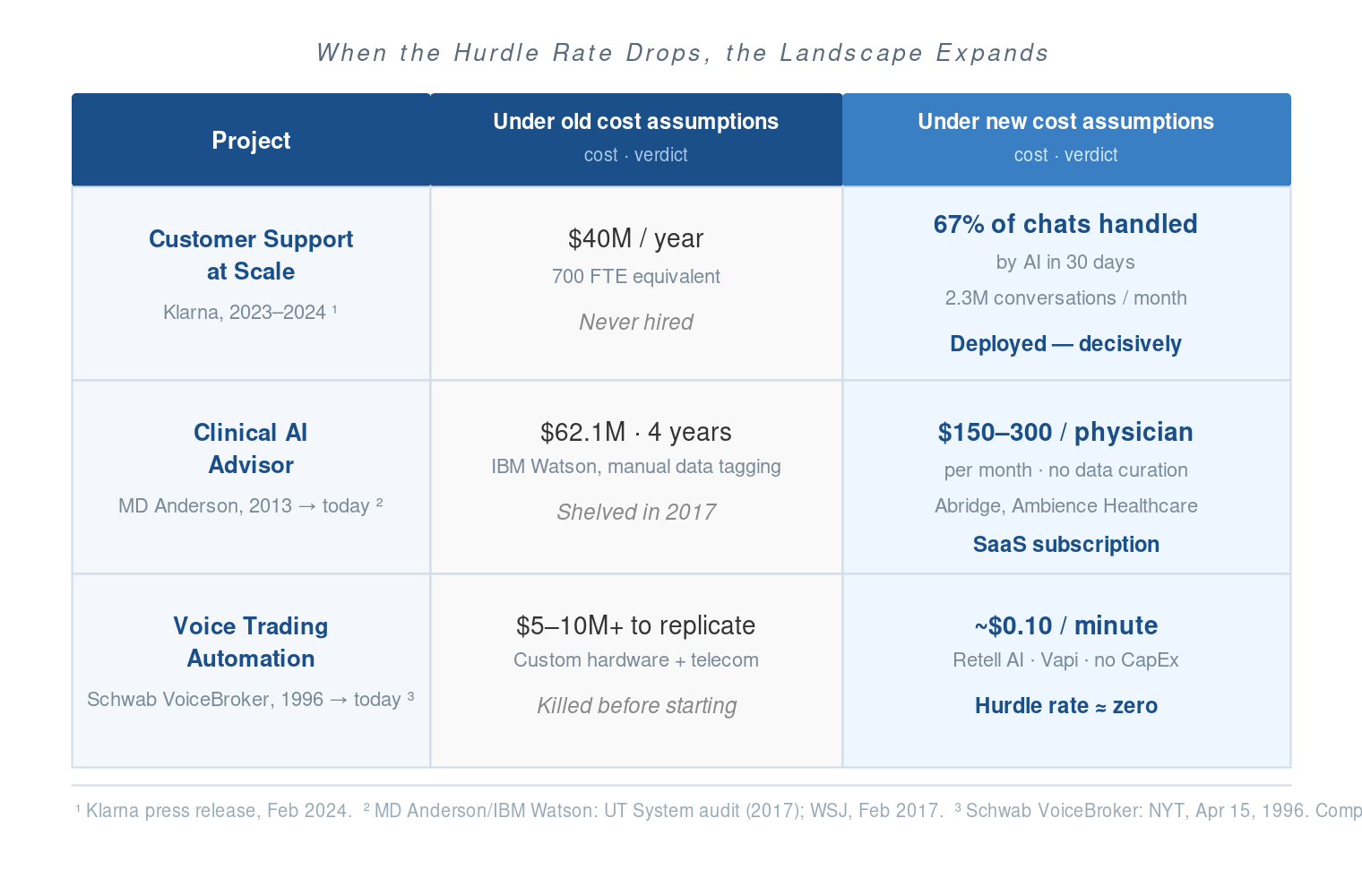

The clearest way to see this is through examples from different time horizons. The pattern repeats.

The recent version (2023–2025). In early 2024, Klarna deployed an AI assistant built on OpenAI’s models. Within 30 days it was handling 67% of all customer service chats (2.3 million conversations a month), doing the work of an estimated 700 full-time agents. Klarna estimated the assistant would drive roughly $40 million in profit improvement in 2024. The AI didn’t replace a marginal process. It replaced a hiring plan that would never have cleared the hurdle rate in the first place.1

The medium-term version (roughly a decade back). Between 2013 and 2017, MD Anderson Cancer Center spent $62.1 million building an “Oncology Expert Advisor” with IBM Watson, a system meant to read physician notes, cross-reference medical literature, and recommend cancer treatments. It was shelved in 2017. The primary failure mode wasn’t the idea. It was the cost of feeding it: armies of human experts manually tagging data to train a pre-LLM system that still couldn’t handle clinical edge cases. Today, Abridge and Ambience Healthcare deploy ambient clinical AI that handles much of that structured documentation work for roughly $150–300 per physician per month, with no manual data curation required. Much of what MD Anderson spent $62 million on and abandoned is now a SaaS subscription.2

The long version (thirty years back). In 1996, Charles Schwab launched VoiceBroker, a natural-language phone system that let customers place trades by speaking plain English. Schwab had the capital to build it. Most firms didn’t. Replicating that capability required proprietary speech-recognition hardware, specialized phonetic dictionaries, and custom telecom infrastructure. The estimates that killed similar projects at smaller brokerages and banks typically started at $5–10 million before a single trade was placed. Today, voice AI APIs like Retell AI and Vapi deliver equivalent or superior natural-language capability for roughly $0.10 per minute, with no upfront capital expenditure. The project that required Schwab’s balance sheet in 1996 now needs almost no capital to clear the hurdle.3
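The scale of that shift is easier to feel as a back-of-the-envelope calculation. This sketch asks how many minutes of usage-priced voice AI you could buy for the low end of the 1990s upfront estimate. It uses only the two figures above and deliberately ignores integration, engineering, and compliance costs, which a real comparison would need to include.

```python
# Figures from the paragraph above, in integer cents to avoid float rounding.
UPFRONT_LOW_END_CENTS = 5_000_000 * 100  # low end of the 5-to-10-million-dollar 1990s estimates
API_RATE_CENTS_PER_MINUTE = 10           # roughly $0.10 per minute today

MINUTES_PER_YEAR = 60 * 24 * 365

# Minutes of metered usage that cost the same as the old upfront price.
parity_minutes = UPFRONT_LOW_END_CENTS // API_RATE_CENTS_PER_MINUTE
years_of_nonstop_calls = parity_minutes / MINUTES_PER_YEAR

print(f"{parity_minutes:,} minutes")                                # 50,000,000 minutes
print(f"{years_of_nonstop_calls:.0f} years of continuous talk time")  # 95 years of continuous talk time
```

Fifty million minutes is roughly 95 years of a single line talking nonstop, before the old build would even have started placing trades.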

Three different eras. The same structural story: a project was correctly rejected under the cost assumptions of its time. Those cost assumptions no longer hold.

The Jason Lemkin Reframe

Jason Lemkin at SaaStr has written about what companies would do with unlimited capital.4 The exercise is useful because it forces you to separate the question “is this worth doing?” from the question “can we afford to do it?” Many of us conflate the two when we reject a project at the hurdle rate, often because we are rushing toward a decision. Sometimes a project is correctly killed. Sometimes it’s a good idea that didn’t survive a cost constraint that no longer exists.

You don’t have unlimited capital. But the denominator, what it costs to execute, just changed.

The practical version of Lemkin’s question isn’t “what would you do with unlimited capital?” It’s: which projects in your parking lot were rejected primarily because of execution cost, rather than because they were bad ideas? Those are the ones that deserve re-evaluation. Not all of them will clear the new math. But more of them will than you think.

What to Actually Do With This

This isn’t an argument for abandoning rigor in project evaluation. If anything, rigor, including the discipline to reject ideas, will matter more. Hurdle rates exist for good reasons and should stay. The argument is narrower: the inputs to the calculation have changed, which means old calculations are stale.

Before you re-evaluate a shelved project, answer three questions. First: was the primary rejection reason execution cost, or was it strategic misfit, a weak demand signal, or poor timing? If it was any of the latter, AI doesn’t change the calculus. Second: what specifically drove the cost estimate? Headcount, implementation time, tooling? Those are the numbers to re-run. Third: what does the project enable if it succeeds? A project that unlocks a new market or capability has a different return profile than one that improves an existing process.
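If you keep the backlog in a spreadsheet or tracker, the three questions translate into a simple triage rule. The sketch below is one hypothetical way to encode them; the field names, reason codes, and example projects are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass, field

# Hypothetical reason code; execution cost is the only rejection reason AI plausibly changes.
EXECUTION_COST = "execution_cost"


@dataclass
class ShelvedProject:
    name: str
    rejection_reason: str  # e.g. "execution_cost", "strategic_misfit", "weak_demand", "timing"
    cost_drivers: list[str] = field(default_factory=list)  # e.g. ["headcount", "tooling"]
    unlocks_new_capability: bool = False


def triage(project: ShelvedProject) -> dict:
    """Apply the three questions in order and return what to do next."""
    # Question 1: if the project died for strategy, demand, or timing, stop here.
    if project.rejection_reason != EXECUTION_COST:
        return {"re_evaluate": False, "rerun": [], "return_profile": None}
    # Question 2: the specific cost drivers are the estimates to re-run.
    rerun = list(project.cost_drivers)
    # Question 3: what success enables shapes how the return should be modeled.
    profile = "new market or capability" if project.unlocks_new_capability else "process improvement"
    return {"re_evaluate": True, "rerun": rerun, "return_profile": profile}


backlog = [
    ShelvedProject("Project A", EXECUTION_COST, ["headcount", "implementation time"], True),
    ShelvedProject("Project B", "weak_demand"),
]

for project in backlog:
    print(project.name, triage(project))
```

The point of the structure is the early exit: a project rejected for anything other than execution cost never reaches the re-costing step.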

I ran this exercise against our own project backlog at Mercy Ships last year. One project had been off the table for years: a comprehensive institutional knowledge base covering all 23 Finance Director responsibilities, structured well enough to hand off to an incoming Finance Director with a seven-month gap between us. The scope had always made it a non-starter. Documentation coverage was at 22% when I started. We finished at 100% in three sessions, using rounds of voice recording and AI synthesis to capture tacit knowledge that would otherwise never have existed in writing.5 That project didn’t clear a lower hurdle rate. It cleared the same hurdle that had been out of reach before.

The greenfield tier is where this matters most. Projects shelved because they were structurally impossible, not just expensive, are the ones most likely to look different now, because the execution path itself has changed. The same approach let me produce the organization’s first-ever Economic Impact Analysis, so we better understand our impact in the countries we serve and can show our host countries how we contribute beyond free surgeries and healthcare training.

Pull your parking lot. Run the numbers again.

Next in this series: Measure Once, Cut Twice? Companies are restructuring around AI. The ones making the most visible mistakes are treating it as a cost reduction. The ones making the most durable bets are treating it as a repositioning opportunity.

Notes

1. Klarna, “Klarna AI assistant handles two-thirds of customer service chats in its first month,” press release, February 2024.

2. The MD Anderson/IBM Watson project cost and timeline are documented in a University of Texas System internal audit (2017) and reported by the Wall Street Journal: “MD Anderson Benches IBM Watson in Setback for Artificial Intelligence in Medicine,” February 2017. The $62.1M figure is from the UT audit.

3. Charles Schwab’s VoiceBroker launch was covered by The New York Times: “Schwab’s New Voice Broker Is a Natural,” April 15, 1996 (may require archive access). The $5–10M infrastructure estimates for competitor projects are drawn from industry reporting of the period on enterprise speech-recognition deployment costs; figures are representative of the range, not attributable to a single named firm.

4. Jason Lemkin, “Imagine a World With Unlimited Capital,” SaaStr.

5. My preferred capture method: a long walk with voice recording. I dictate thoughts, reflections, concerns, and opportunities in long form, then feed the transcript back to AI for synthesis. It sharpens the thinking, gets me out of the house, and is a reliable source of mid-day vitamin D.