A Practitioner’s Guide to VSM and VSA in Knowledge Work

Eleven months after launching a new expense approval system, I discovered that Stage 2 — the gap between Finance approval and Payables processing — was eating more than half of total cycle time. The bottleneck wasn’t the approval chain. It was the queue after approval. Value Stream Mapping (VSM) made that visible in a way that looking at total turnaround time never would have.

That finding came from applying VSM to a finance workflow — something I’d never done before. It took an afternoon with AI. It would have taken weeks without it, if I’d attempted it at all.

This is the second of two posts. The first covers how we built the system that generated the data. This one is about what we found when we finally looked at it — and the methodology that made it possible.

A Little Context

I currently serve as Finance Director on the Global Mercy, a hospital ship operated by Mercy Ships, docked in Freetown, Sierra Leone. Our five-person team processes expense requests from across the ship — reimbursements, petty cash advances, cash requests from departments.

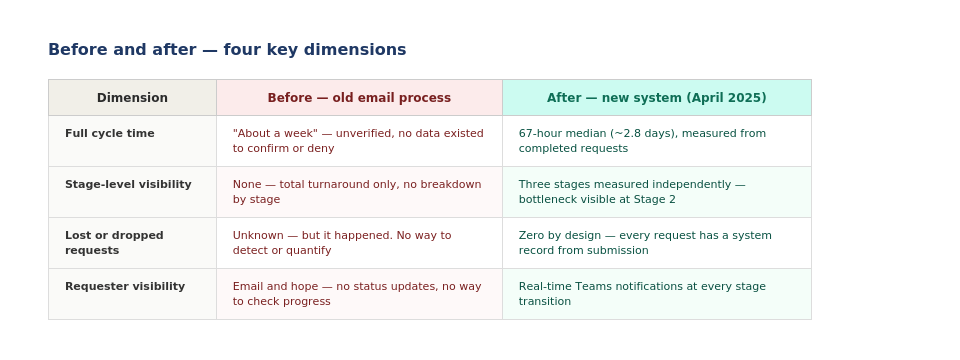

In April 2025, we replaced a broken email-based process with a system built on Microsoft 365: Forms for submission, SharePoint as the system of record, Power Automate for routing, and Teams for approvals. The most important thing that system did, beyond fixing the workflow, was generate timestamped data at every stage. That data is what made the analysis below possible.

The build story is covered in full in the companion post. The short version: we went from zero tracking to a full audit trail in a few months. Then we waited to see what the data would tell us.

What VSM and VSA Actually Are

Before getting into the findings, it’s worth naming the methodology — because both terms get used loosely (and it took me a long time to understand that they are not synonyms).

VSM — Value Stream Mapping — is a Lean methodology that maps every step in a process from request to fulfillment, identifying where value is created, where it is delayed, and where waste occurs. It produces two maps: a current state (what actually happens) and a future state (what should happen after improvement).

VSA — Value Stream Analysis — is the diagnostic layer that sits on top of the map. It quantifies where time is lost and ranks which improvements will have the most leverage. VSM shows you the shape of the process. VSA tells you where to intervene first.

Together, they are a map and a prioritized action list. Used separately, you get either a pretty diagram that sits on the shelf, or a data set without a powerful interpretive frame.

| 💡 Why this matters for knowledge work VSM originated in manufacturing. But the underlying problem — most of the elapsed time in a process is waiting, not working — is universal. It applies to software delivery, healthcare, financial services, and operations. The methodology transfers. What has changed recently is accessibility: AI now makes VSM and VSA available to small teams without dedicated Lean practitioners. |

How AI Changed What Was Possible

I had read about VSM for years and used it lightly at GitLab. I had never applied it systematically because it requires time and Lean expertise I didn’t have. The Yellow Belt certification helped with the theory. The application was still a different problem.

Working with Claude, I was able to:

- Export the redacted SharePoint dataset and run a structured cycle time analysis broken down by stage, by month, and by quarter.

- Map the current-state workflow using VSM methodology — swim lanes, handoff points, waste taxonomy — at a level of rigor I could not have produced alone. At best, eight weeks of work. Done in an afternoon (roughly 4 hours vs. 320 hours).

- Identify specific, actionable improvement opportunities: not just ‘this stage is slow’ but ‘here is the statistical distribution, here is the likely cause, and here is where to intervene.’

| 💡 What this means for any operations team The value is not just efficiency. AI enabled me to apply methodologies and frameworks outside my native expertise, at a level of rigor that produced genuinely useful output. AI made them accessible to a five-person team on a hospital ship with no dedicated analytics resource. The same dynamic applies to any GTM Ops team without a full-time data analyst. |

What the Data Showed

The dataset covers requests submitted between April 2025 and March 2026.

The first thing VSM revealed: we had been looking at total turnaround time. VSM forced a stage-by-stage view, and the picture was entirely different:

| Stage | What it measures | Median time | Key finding |

| Stage 1 | Submission → Finance approval | 2.5 hours | Not the bottleneck. Approval chain is working. |

| Stage 2 | Finance approval → Payables processed | 22.5 hours | Primary bottleneck. Over half of total cycle time sits here. |

| Stage 3 | Payables processed → Request completed | 6.8 hours | Secondary delay. Requesters slow to collect. |

Stage 2 accounted for the majority of total cycle time — and it had nothing to do with approval speed. The request was already approved. It was sitting in queue waiting for Payables capacity. That is a scheduling and prioritization problem, not an approval problem. Without the stage-by-stage breakdown, we would have kept trying to speed up approvals and never touched the actual constraint.

VSA surfaced a second finding: the error rate. Roughly 15–20% of submissions contained errors requiring correction — wrong GL codes, missing codes, unreadable receipts. These were being missed in Stage 1 which caused the payables processing time to expand to fix the errors, which inflated the average without showing up in median figures. The fix is at submission, not at approval. The problem wasn’t that payables was slow, it was that I approved requests when they shouldn’t have been! Oops again.

The quarterly trend data surfaced a third: cycle time spiked during my PTO period. The backup Finance Approvers had training and system access — but response times slowed because of time zones. The data made that visible.

None of these findings would have been visible looking at total turnaround time. All three required the stage-by-stage view that VSM imposes.

What VSA Named That We Couldn’t See

Lean Six Sigma identifies eight categories of waste — sometimes called DOWNTIME. Two showed up most clearly in this analysis.

Waiting accounted for the bulk of Stage 2. The process wasn’t broken; it was queued. The difference matters: a broken process needs redesign, a queued process needs scheduling. Treating them the same produces the wrong intervention.

Defects — the error rate in submissions — is the second. Under the old email process, errors were corrected silently and moved on. Under the new system, they became visible. Visibility is the first step. The fix, in this case, is upstream: better form design and GL code guidance at the point of submission, not better error correction at the point of approval.

| 💡 The key insight from VSA Our natural instinct is to intervene where we have the most control. In this case, that would have meant speeding up the Finance Approver — Stage 1. The data said look at Stage 2 instead, then look upstream at submission quality. VSA doesn’t just tell you where time is lost. It tells you where your instincts are pointing at the wrong thing. |

Takeaways

VSM and VSA are not tools for specialists. They’re tools for us regulars who run a process with observable data and wants to understand it honestly. Clarity can drive innovation.

My barrier used to be expertise and time. A proper VSM workshop required dedicated Lean practitioners and weeks of facilitation. While that is still true, we can at least run a lightweight analysis. AI doesn’t replace the methodology — it makes the data analysis and documentation layers accessible to practitioners who understand their process well but don’t have deep Lean credentials (and have the humility to know when to stop). Like me.

The methodology here is the same one I applied at GitLab building Customer Success Operations systems at scale. The environment is different. The constraints are more extreme. The discipline is identical.

If you’re building a process from scratch and haven’t yet run a VSM/VSA cycle on it, that’s the next step. And if you haven’t built the data infrastructure that makes it possible, that’s the step before that — which is what the companion post covers.

Scary, and cool.